Using MCPs with evals

When I joined Fractional AI, one of the first lessons I learned was that evaluations drive every major decision in our projects, from first prototype through post-launch improvement. In our initial customer meetings, we position eval-driven development as our core methodology and maintain that commitment throughout the entire build. It's how we demonstrate rigor: everything has a quantifiable evaluation we use to justify changes, calibrate performance, and learn what's working.

As you can imagine, I was so excited when my first project reached the key milestone of establishing an initial evaluations suite. Unfortunately, excitement turned to confusion, and frankly, dismay, when I opened up the UI for the first time.

I couldn't tell what numbers mattered, what the baseline was, or what I was even supposed to take away at first glance. Everything felt like it was competing for attention, yet nothing jumped out as actionable.

As a Forward Deployed Product Manager, I own the customer experience, which includes keeping our clients apprised of improvements and progress so they can react, ask questions, and discuss the tradeoffs of potential improvements. If I couldn't figure out where we stood with our evals, how could I deliver on our promise to the customer?

In the early days, I leaned heavily on our engineering team to parse what I was seeing in the UI, sending screenshots and asking which values were the right ones to present or how two runs differed. I was spending valuable time verifying that I had the right values instead of thinking about what they meant for our build.

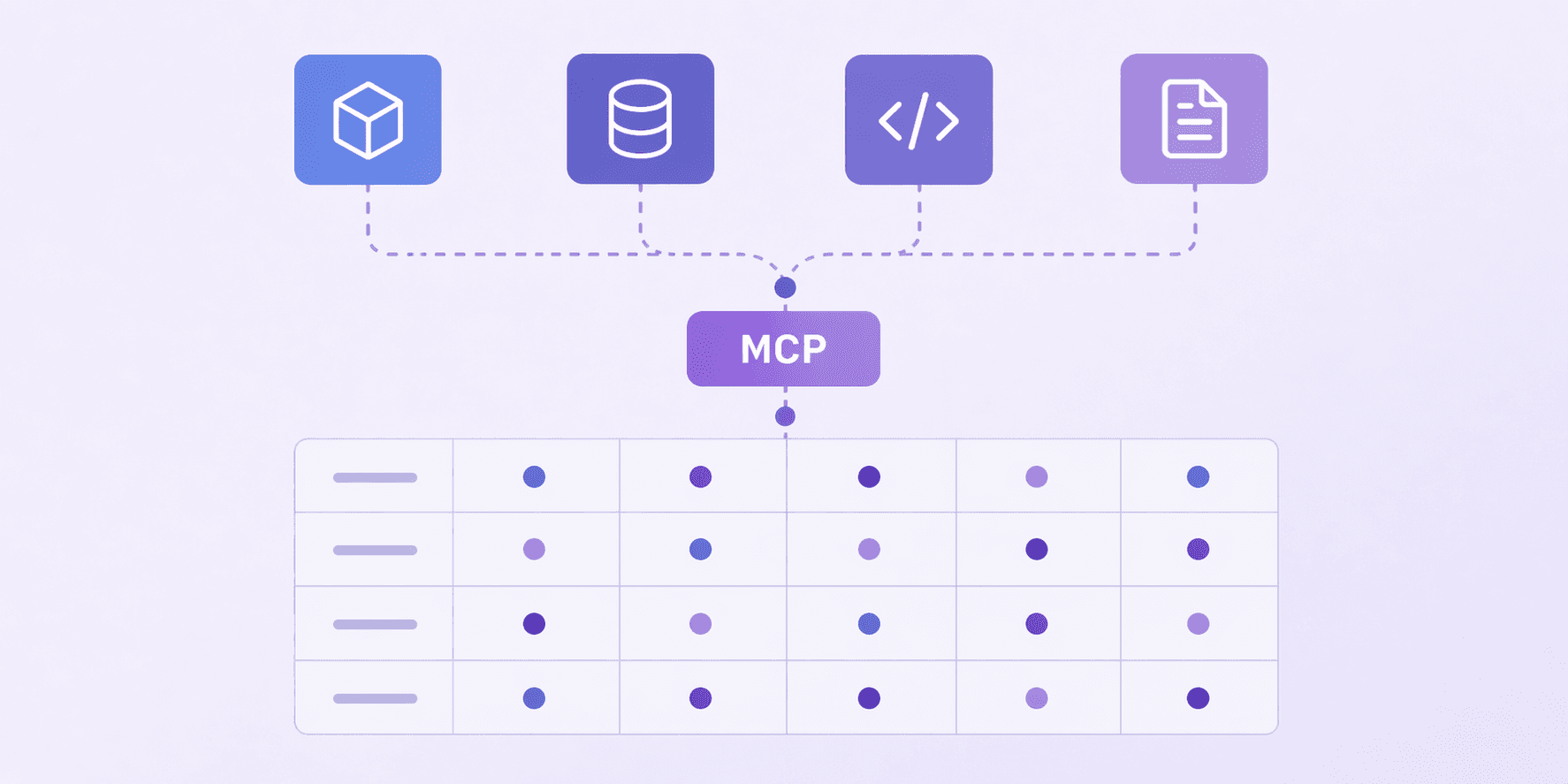

Hooray for the W&B MCP!

When our engineer told me about the W&B MCP, it truly changed my workflow. Instead of navigating the interface and asking myself or the team "is this the right field?", I could query directly in natural language: "Show me the color scores over time."

Better still, follow-up Q&A was efficient, which kept my momentum as I investigated trends and dove into the data.

I joined Fractional AI to learn what it means to be an AI PM, and most of that means building GenAI solutions. But we also emphasize AI-native development: using AI to build products, not just building AI products. This was a tangible example of what that looks like for a PM and of the ways AI can enable our work. I still asked the questions, interpreted the results, and crafted the narrative for the customer, but I no longer experienced friction in accessing the data. I was able to spend that saved time thinking about the meaning behind the numbers and how to drive the most value for our customer. The shift from "where is this data?" to "what does this data mean?" is exactly the kind of efficiency gain that pays back directly into customer outcomes.