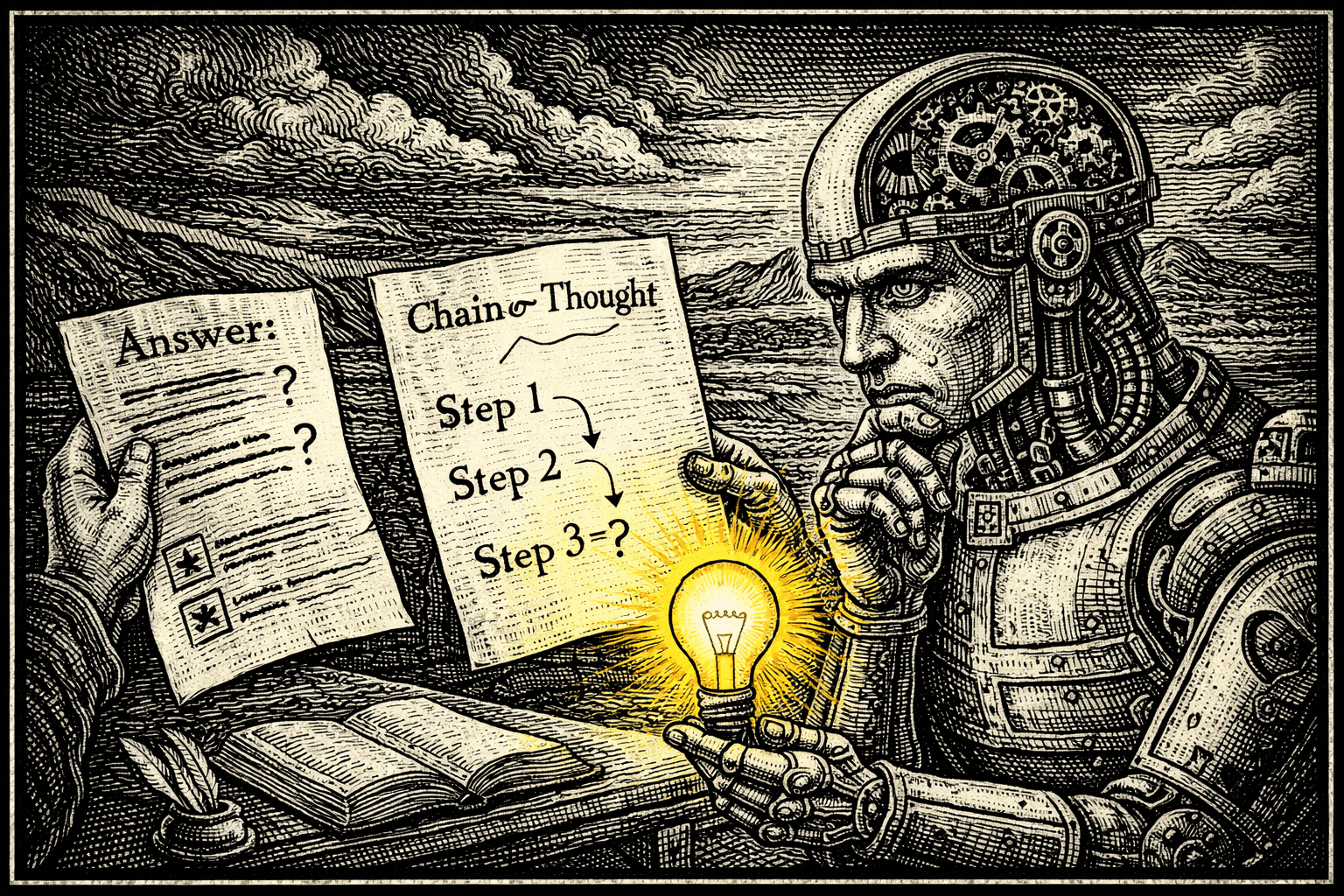

The Virtues of Showing Your Work: Do LLM Explanations Actually Help?

If you've spent any time prompting large language models, you've probably heard of Chain of Thought (CoT) reasoning—the technique of asking an LLM to "show its work" by generating intermediate reasoning steps before arriving at an answer. First popularized by [Wei et al. 2022], CoT has become a go-to method for improving performance on mathematical and logical problems. The intuition is appealing: by breaking down complex problems into steps, models can "walk" to the correct answer rather than attempting a risky zero-shot "jump." But does this benefit extend beyond math problems? And perhaps more importantly, can we actually trust the explanations these models generate?

Out of curiosity, I tested two models (gpt-5-mini and gpt-5 with low reasoning) on two very different classification tasks: hierarchical product classification from customer reviews, and fake news detection. For each task, I compared three prompting strategies: asking for just the label, asking for the label followed by an explanation, and asking for an explanation followed by the label. For hierarchical product classification, the model chooses a category at each of levels 1, 2, and 3; higher levels are more granular and represent subcategories. For fake news, I simply measured a binary decision: real or fake.
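To make the three strategies concrete, here is a minimal sketch of how the prompts might be built. The wording, the `build_prompt` name, and the strategy names are illustrative assumptions, not the exact prompts used in the experiments.

```python
# Hypothetical prompt builder for the three strategies described above.
# The instruction wording is an assumption made for illustration.
STRATEGIES = ("label_only", "label_then_explain", "explain_then_label")


def build_prompt(text, labels, strategy):
    """Return a classification prompt for one of the three strategies."""
    options = ", ".join(labels)
    task = f"Classify the text into one of: {options}.\n\nText: {text}\n\n"
    if strategy == "label_only":
        return task + "Respond with the label only."
    if strategy == "label_then_explain":
        return task + "Respond with the label first, then briefly explain your choice."
    if strategy == "explain_then_label":
        return task + "First explain your reasoning briefly, then give the label on the final line."
    raise ValueError(f"unknown strategy: {strategy!r}")


if __name__ == "__main__":
    for name in STRATEGIES:
        print(f"--- {name} ---")
        print(build_prompt("Congress passes budget deal.", ["real", "fake"], name))
```

The only thing that changes between strategies is the final instruction, so any difference in accuracy can be attributed to the ordering of label and explanation rather than to the task description.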

The results were mixed. On product classification, the explain-then-label approach showed modest improvements: gpt-5/low increased slightly from 58% to nearly 60% accuracy across all classification levels. On fake news detection, gpt-5-mini saw small gains, improving from 61% to 64.5% when asked to explain first. Interestingly, however, gpt-5/low, the more advanced reasoning model, actually scored lower on the fake news dataset than gpt-5-mini. More generally, the gains from explanations are small: asking for an explanation seems to have very little overall impact on accuracy.

| Prompt | Model | Level 1 Acc | Level 2 Acc | Level 3 Acc | All Level Acc |
|---|---|---|---|---|---|
| Just label | gpt-5-mini | 81.33% | 60.67% | 56.33% | 37.33% |
| Label/explanation | gpt-5-mini | 82.00% | 60.33% | 57.67% | 37.67% |
| Just label | gpt-5/low | 88.67% | 79.67% | 69.00% | 58.00% |
| Label/explanation | gpt-5/low | 91.33% | 80.33% | 69.33% | 59.67% |

Figure 1. Results of using gpt-5-mini and gpt-5 with low reasoning to predict product categories at three levels for the Kaggle product review dataset, with and without explanations for labels.
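The "All Level Acc" column is noticeably lower than any single level. Here is a small sketch of one way to compute it, assuming it counts a prediction as correct only when all three levels match at once (my reading of the column; the function name and toy categories are made up for illustration).

```python
def level_accuracies(gold, pred):
    """Compute per-level accuracy and all-levels-correct accuracy.

    gold and pred are equal-length lists of (level1, level2, level3) tuples.
    Returns ([acc_l1, acc_l2, acc_l3], acc_all).
    """
    if len(gold) != len(pred) or not gold:
        raise ValueError("gold and pred must be non-empty and the same length")
    n = len(gold)
    # Accuracy at each level, scored independently of the other levels.
    per_level = [
        sum(g[i] == p[i] for g, p in zip(gold, pred)) / n for i in range(3)
    ]
    # Assumption: "all level" accuracy requires every level to be right.
    all_levels = sum(tuple(g) == tuple(p) for g, p in zip(gold, pred)) / n
    return per_level, all_levels


if __name__ == "__main__":
    # Toy, made-up categories; not taken from the dataset.
    gold = [("Toys", "Games", "Puzzles"), ("Pets", "Dogs", "Leashes")]
    pred = [("Toys", "Games", "Cards"), ("Pets", "Cats", "Leashes")]
    print(level_accuracies(gold, pred))
```

Under this definition the combined score can never exceed the lowest per-level score, since errors at different levels can fall on different reviews.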

| Prompt | Model | Accuracy |
|---|---|---|
| Just label | gpt-5-mini | 61% |
| Label/explain | gpt-5-mini | 63% |
| Just label | gpt-5/low | 58% |
| Explain/label | gpt-5/low | 58.5% |

Figure 2. Results of using gpt-5-mini and gpt-5 with low reasoning to predict whether a news story represents real or fake news, with and without explanation provided first.

The broader research literature paints a similarly complicated picture. While [Lee et al. 2024] found that explanations improved scoring on science assessments, a comprehensive study by [Wu et al. 2025] tested 95 advanced LLMs across 87 real-world clinical text tasks in 9 languages and found that CoT actually hurt performance in 86.3% of models tested. The emerging pattern suggests that CoT helps most when problems require explicit multi-step computation or symbolic manipulation, and can backfire when tasks rely more on intuition, pattern matching, or holistic assessment of varied evidence. Sometimes, overthinking introduces errors. When a model has already seen relevant material during training and may have effectively memorized the answer, asking for an explanation first merely risks muddying the context.

The more troubling question is whether we should trust LLM explanations at all. A striking finding from [Barez et al. 2025] is that nearly 25% of recent arXiv papers incorporating CoT treat it as a technique for model interpretability, essentially assuming that explanations reveal why a model made a decision.

But there's growing evidence this assumption is flawed. [Dutta et al. 2024] demonstrated that LLMs often have multiple reasoning pathways that can lead to the same answer, meaning the explanation you see may not reflect the actual computational process. Even more concerning, [Chen et al. 2025] showed that simply providing a different answer as a "hint" causes models to change their answer and generate an entirely new explanation to justify it, which suggests explanations are often post-hoc rationalizations rather than faithful accounts of reasoning.
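If you want to probe this on your own task, a rough sketch of a hint test might look like the following. This is not the protocol from the cited paper; `ask` is a hypothetical callable that sends a prompt to whatever model you use and returns its label, and the hint wording is my own.

```python
def hint_flip_rate(ask, questions, hints):
    """Fraction of questions where a hint flips the answer to the hinted label.

    ask: hypothetical callable, ask(prompt) -> label string.
    questions: list of prompt strings.
    hints: list of labels to suggest, one per question.
    """
    if len(questions) != len(hints) or not questions:
        raise ValueError("questions and hints must be non-empty and the same length")
    flips = 0
    for question, hint in zip(questions, hints):
        baseline = ask(question)
        hinted = ask(f"{question}\n\nHint: the answer may be {hint}.")
        # Count only cases where the answer changed AND now matches the hint.
        if hinted != baseline and hinted == hint:
            flips += 1
    return flips / len(questions)


if __name__ == "__main__":
    # Stand-in model that always follows a "fake" hint; replace with a real call.
    def always_swayed(prompt):
        return "fake" if "Hint: the answer may be fake" in prompt else "real"

    print(hint_flip_rate(always_swayed, ["Is story A real or fake?"], ["fake"]))
```

A high flip rate, paired with explanations that never mention the hint, would be a warning sign that the explanations are being written to fit the answer.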

Figure 3. Examples of explanations from Claude for why the same article - a Reuters article on budget negotiations in the U.S. Congress from 2017 - is either real or fake news. Claude presents points for either view in its explanation.

So what should practitioners take away from all this? Explanations serve different purposes depending on your goal.

If you want to convince a user that a classification decision is reasonable—to build trust and provide context—explanations can be valuable.

Explanations are also genuinely useful for problems that follow explicit logical steps.

But if you're trying to "understand" why a model made a particular choice, or using explanations to guide prompt engineering, then you may be building on shaky ground.

The explanation you receive is best thought of as a plausible story, not a window into the model's actual decision-making process. Use CoT strategically, test whether it actually improves your specific task, and resist the temptation to over-interpret the reasoning you get back.
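One way to test whether CoT actually helps on your task is to score both prompting strategies on the same examples and run a paired test. The sketch below uses an exact McNemar test, a standard choice for comparing two classifiers on paired data; I did not run it on the experiments above, and `mcnemar_exact` and its arguments are names chosen for illustration.

```python
from math import comb


def mcnemar_exact(correct_a, correct_b):
    """Exact two-sided McNemar test on paired correctness flags.

    correct_a, correct_b: equal-length lists of booleans, one pair per example,
    saying whether strategy A and strategy B got that example right.
    Returns (a_only, b_only, p_value).
    """
    if len(correct_a) != len(correct_b):
        raise ValueError("inputs must be the same length")
    # Only examples where the two strategies disagree carry information.
    a_only = sum(1 for a, b in zip(correct_a, correct_b) if a and not b)
    b_only = sum(1 for a, b in zip(correct_a, correct_b) if b and not a)
    n = a_only + b_only
    if n == 0:
        return a_only, b_only, 1.0
    # Under the null, each disagreement favors A or B with probability 1/2.
    k = min(a_only, b_only)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return a_only, b_only, min(1.0, 2 * tail)


if __name__ == "__main__":
    # Toy data: B fixes 10 of A's errors and breaks nothing.
    just_label = [False] * 10 + [True] * 5
    explain_first = [True] * 15
    print(mcnemar_exact(just_label, explain_first))
```

If the p-value is large, a gain of a point or two in accuracy is likely within noise, and the extra tokens spent on explanations may not be buying anything.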